Teams are looking for the next step in infrastructure acceleration: artificial intelligence (AI) that acts autonomously, helping to design environments, create or update infrastructure as code, and make adjustments after the initial deployment.

However, the risks associated with AI are very real: an AI agent could misconfigure production, violate compliance rules, or overrun a budget at an alarming rate. Each of these risks maps directly to a class of policy controls, such as location allow-lists, mandatory encryption, and cost thresholds, that can be evaluated automatically before changes are applied.

With a control system such as Quali Torque, automated cloud modifications can be reviewed before they go live by leveraging policy-as-code governance and intelligent automation.

In this post, we’ll discuss why manual governance can fail in enterprise IaC, how policy-as-code governance integrates into day-to-day development processes, and how Quali Torque and Quali DevOps Agent together form an agentic AI system for Infrastructure as Code (IaC).

Where you are today: IaC at enterprise scale

Infrastructure-as-code deployments are frequently managed across numerous repositories and pipelines. They typically involve a complex blend of:

- Shared Terraform/OpenTofu modules for networks, IAM, clusters, databases, logging, etc.

- Helm charts and Kubernetes manifests for applications.

- Various build and deployment processes.

The rules that govern these deployments are frequently scattered across various places:

- Wiki pages (“only approved regions for deployments”).

- Tribal knowledge (“prod requires encryption and team label assignments”).

- Hero reviewers (“ask Alex to sanity-check the plan”).

This system functions adequately only when humans are the sole users. As build volume increases, for instance with short-lived dev/test environments, per-pull-request build servers, or more frequent builds, the process becomes harder to maintain. Granting human-like privileges to a non-human entity, specifically an AI agent, raises further problems.

Manual reviews don’t scale

Even well-designed manual approval mechanisms can’t keep up when Terraform plans and Helm diffs grow in volume and frequency. Approval doesn’t safeguard against drift, nor does it ensure that decisions are applied consistently across departments.

When you design your system around “people remembering the rules,” AI agents will not act safely either. Agents work from explicit inputs (e.g., “create an environment”), so they can easily violate unstated assumptions (e.g., “deployments only in eu-central-1 with encryption”).

The fix is to feed your policies into an automated decision-making system that consistently answers the question, “Is this action allowed?” before you let an agent create or modify infrastructure.

This is policy-as-code management. Instead of relying on implicit human judgment, every infrastructure action, whether initiated by a person or an AI agent, is explicitly evaluated against codified rules that are designed to prevent these failure modes.
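As a rough illustration of that question, the sketch below codifies the unstated assumption from earlier (“deployments only in eu-central-1 with encryption”) as a single check that treats human and agent requests identically. The `ActionRequest` shape, the field names, and the allow-list are hypothetical; this is not a Torque schema or API.

```python
from __future__ import annotations

from dataclasses import dataclass


# Hypothetical request shape; field names are illustrative only.
@dataclass(frozen=True)
class ActionRequest:
    actor: str       # e.g. "human:alex" or "agent:devops"; both are checked the same way
    region: str
    encrypted: bool


# Assumed allow-list, taken from the example rule in the text.
ALLOWED_REGIONS = frozenset({"eu-central-1"})


def is_allowed(request: ActionRequest) -> tuple[bool, list[str]]:
    """Answer "Is this action allowed?" and list the reasons when it is not."""
    reasons = []
    if request.region not in ALLOWED_REGIONS:
        reasons.append(f"region {request.region!r} is not in the allow-list")
    if not request.encrypted:
        reasons.append("encryption is required")
    return (not reasons, reasons)


allowed, reasons = is_allowed(ActionRequest("agent:devops", "us-east-1", encrypted=False))
print(allowed, reasons)
```

The point of the sketch is that the rule no longer depends on who remembers it: the same function runs for every actor and returns its reasons alongside the verdict.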

What policy‑as‑code management actually means for you

Policy as code means you write your security, compliance, cost, and operational rules as code that runs automatically whenever an infrastructure decision is made.

The table below shows how common “rules in a doc” translate into enforceable controls.

| Human rule in a doc | Enforced rule in policy-as-code |
| --- | --- |
| We don’t deploy public buckets. | Deny any plan that creates a public bucket. |
| All environments must be tagged. | Block launches unless cost center + owner tags are present (and valid). |
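To make the two rows concrete, here is a minimal sketch that scans a Terraform plan in its JSON form (the `resource_changes` list produced by `terraform show -json`). The tag keys, the set of ACL values treated as public, and the choice to check tags only on resources that expose a `tags` attribute are all assumptions made for illustration; in Torque, rules like these would be written in Rego rather than Python.

```python
from __future__ import annotations

REQUIRED_TAGS = ("cost_center", "owner")             # assumed tag keys
PUBLIC_ACLS = {"public-read", "public-read-write"}   # ACL values treated as public here


def plan_violations(plan: dict) -> list[str]:
    """Return one message per violated rule for every resource the plan creates."""
    violations = []
    for rc in plan.get("resource_changes", []):
        change = rc.get("change", {})
        if "create" not in change.get("actions", []):
            continue
        after = change.get("after") or {}
        address = rc.get("address", "<unknown>")

        # Rule 1: deny any plan that creates a public bucket.
        if rc.get("type") in ("aws_s3_bucket", "aws_s3_bucket_acl") and after.get("acl") in PUBLIC_ACLS:
            violations.append(f"{address}: public bucket ACL {after['acl']!r}")

        # Rule 2: block unless required tags are present and non-empty.
        # Simplification: only resources with a "tags" attribute are checked.
        if "tags" in after:
            tags = after.get("tags") or {}
            missing = [t for t in REQUIRED_TAGS if not tags.get(t)]
            if missing:
                violations.append(f"{address}: missing required tags: {', '.join(missing)}")
    return violations


sample_plan = {
    "resource_changes": [
        {
            "address": "aws_s3_bucket.logs",
            "type": "aws_s3_bucket",
            "change": {
                "actions": ["create"],
                "after": {"acl": "public-read", "tags": {"owner": "alex"}},
            },
        }
    ]
}

for message in plan_violations(sample_plan):
    print("DENY:", message)
```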

Three traits make policy‑as‑code work in the real world:

- Policies are versioned and tested: All policies are kept in a Git repository, which developers can modify through pull requests and test in the same way as regular code.

- Policies run at decision points: Rules are enforced before any change reaches production, enabling you to catch violations before they occur rather than detecting them afterwards in real systems.

- Policies return clear outcomes: A well-designed policy system returns a clear decision (allow, deny, warn, or require approval) along with the reasons for it.
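One way to picture “clear outcomes with reasons” is a small decision type plus a rule for combining the results of several policies. The sketch below assumes a severity ordering in which the most restrictive outcome wins and an empty policy set defaults to allow; both are illustrative design choices, not something any particular engine prescribes.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import IntEnum


class Outcome(IntEnum):
    # Assumed severity ordering: a higher value is more restrictive.
    ALLOW = 0
    WARN = 1
    REQUIRE_APPROVAL = 2
    DENY = 3


@dataclass(frozen=True)
class Decision:
    outcome: Outcome
    reason: str


def combine(decisions: list[Decision]) -> tuple[Outcome, list[str]]:
    """Most restrictive outcome wins; reasons from every non-allow decision are kept."""
    final = max((d.outcome for d in decisions), default=Outcome.ALLOW)
    reasons = [d.reason for d in decisions if d.outcome != Outcome.ALLOW]
    return final, reasons


final, reasons = combine([
    Decision(Outcome.ALLOW, "region is approved"),
    Decision(Outcome.WARN, "environment has no description"),
    Decision(Outcome.REQUIRE_APPROVAL, "estimated cost is above the review threshold"),
])
print(final.name, reasons)
```

Because policies live in Git, a function like `combine` can be covered by ordinary unit tests and reviewed in pull requests like any other code.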

There are various platforms and tools for implementing this approach, such as Open Policy Agent (OPA), Conftest, HashiCorp Sentinel, and Kubernetes admission controllers (e.g., Gatekeeper or Kyverno). The specific technology varies, but the principle stays the same: Every change, human or AI, must pass automated checks before it’s applied.

What agentic AI for IaC really is (and why it needs guardrails)

While GenAI focuses on responding to prompts with output, an agentic AI is a system designed to perform actions. In infrastructure terms, an agent can:

- Observe state and signals (desired state, current state, telemetry, diffs).

- Determine which elements need changes.

- Propose a change (PR, plan, blueprint).

- Execute actions for applying changes, creating or deleting resources.

This is powerful, and risky, because agents are fast, persistent, and confident. A single wrong assumption can be applied repeatedly before anyone notices, causing misconfigurations, outages, or an expensive cloud bill.

Your objective is not “complete freedom of action” but “constrained autonomy.” The agent moves quickly inside the box and is blocked or escalated when it tries to exceed its limits. To achieve this, you need an enforcement layer between the agent and the cloud. This layer acts as a safety buffer, translating high-level organizational intent into concrete, machine-enforceable constraints that agents cannot bypass.

How Quali Torque and Quali DevOps Agent implement policy‑as‑code management for agentic IaC

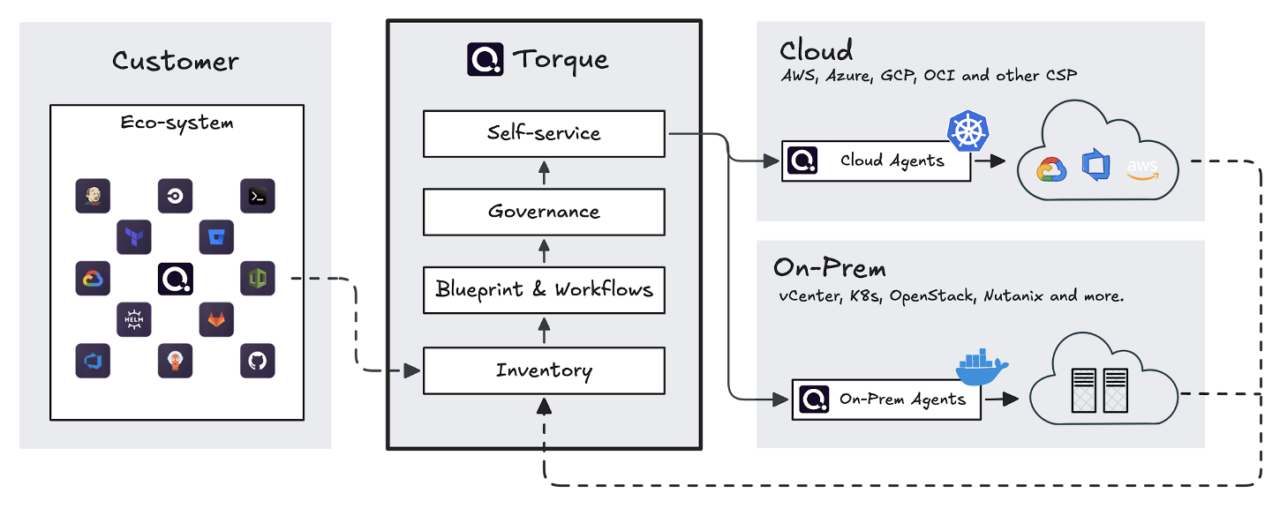

The safest way to govern both humans and agents is to route environment actions through a single control plane. That’s the design idea behind Quali Torque.

Quali Torque is positioned as an Environment‑as‑a‑Service control plane that sits on top of your existing IaC and cloud providers. It helps you turn Terraform/OpenTofu, Helm, and other assets into reusable environment blueprints and publish them to a self‑service catalog. Environments launched from that catalog can be tracked through their lifecycle and terminated when they’re no longer needed.

Figure 1: Quali Torque architecture

This lifecycle enforcement matters because Torque becomes the “choke point” where governance can be applied consistently, no matter who initiated the action. Whether the request comes from a developer clicking “launch” or an AI agent attempting to optimize infrastructure, the same policies are evaluated in the same way.

OPA-powered policy-as-code in Torque

Torque policies are powered by Open Policy Agent (OPA) and evaluated as part of the environment deployment pipeline at key moments:

- Before launch (consumption policies).

- At launch and extend (environment lifecycle policies).

- During Terraform plan evaluation (Terraform evaluation policies).

You’re not just enforcing “who can click launch.” You can enforce “what will actually be deployed” by evaluating the Terraform plan output.

Torque policies are written in Rego and can be applied at the account level or per space/team. Policies return explicit decisions (approved, denied, or requiring manual approval) with reasoning that explains why each decision was made.

The common guardrails you can encode map directly to enterprise pain:

- Duration limits prevent environments from running indefinitely.

- Concurrency limits cap the number of active environments per user or team.

- Cost limits block launches that exceed a defined threshold.

- Location/instance allow-lists restrict regions or instance types.

- Hygiene requirements require tags, enforce encryption defaults, or block disallowed network patterns.

- Approval policies require human review for high-risk environments or changes.
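The sketch below shows how a few of these guardrails (duration, concurrency, cost, and an approval threshold) might combine into one launch decision. It is written in Python purely for illustration; the real Torque policies are Rego, and every field name and limit value here is hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class LaunchRequest:
    owner: str
    duration_hours: float
    estimated_cost_usd: float


@dataclass(frozen=True)
class Limits:
    # All values are made-up examples, not Torque defaults.
    max_duration_hours: float = 8
    max_active_per_owner: int = 3
    max_cost_usd: float = 500
    approval_cost_usd: float = 200   # above this, a human must approve


def evaluate_launch(req: LaunchRequest, active_count: int, limits: Limits = Limits()) -> tuple[str, list[str]]:
    """Return ("approved" | "approval_required" | "denied", reasons)."""
    denials = []
    if req.duration_hours > limits.max_duration_hours:
        denials.append(f"duration {req.duration_hours}h exceeds {limits.max_duration_hours}h")
    if active_count >= limits.max_active_per_owner:
        denials.append(f"{req.owner} already has {active_count} active environments")
    if req.estimated_cost_usd > limits.max_cost_usd:
        denials.append(f"estimated cost ${req.estimated_cost_usd} exceeds ${limits.max_cost_usd}")
    if denials:
        return "denied", denials
    if req.estimated_cost_usd > limits.approval_cost_usd:
        return "approval_required", ["estimated cost is above the approval threshold"]
    return "approved", []


print(evaluate_launch(LaunchRequest("team-a", duration_hours=4, estimated_cost_usd=50), active_count=1))
print(evaluate_launch(LaunchRequest("team-a", duration_hours=24, estimated_cost_usd=900), active_count=3))
```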

Because policies are code, you can treat them like any other shared platform asset: version, review, test, and roll them out safely. This makes governance predictable and auditable, even as the number of automated actions increases. If you want sample policies, Quali maintains built-in policy templates on GitHub.

Where the “agentic” part fits: Quali’s DevOps Agent

Quali DevOps Agent functions as an agentic AI system that performs automated infrastructure tasks and surfaces environment data to users. It enables users to create blueprints via natural language input, reduces ticket requests through self-service functionality, and shortens troubleshooting time by displaying provisioning information.

The main safety feature is built into the system design: the agent executes its operations through Quali Torque, so every action passes through the same OPA-backed policy engine that governs all other users.

A simple model to aim for is propose → evaluate → enforce, which works like this:

- The agent proposes a blueprint change or a lifecycle operation (launch, scale, or teardown).

- Torque evaluates the request against the relevant policies.

- Torque returns a decision: deny, approve (continue), or require approval.

- The agent receives the decision and uses it as feedback to fix, iterate, and retry, for example by changing the region, reducing the duration, or adding required tags.

For example, imagine an AI agent attempting to launch a new test environment in an unapproved region with an oversized instance type. The agent proposes the change, Torque evaluates the resulting Terraform plan against location and cost policies, and the request is automatically denied with a clear explanation. The agent can then adjust the region or instance size and retry without ever putting production or budgets at risk.
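The scenario above can be sketched as a small loop. The `evaluate` function is a stand-in for the policy engine, not Torque's API, and the allow-lists are hypothetical. For simplicity the denial carries suggested replacement values; a real agent would have to derive its fix from the denial reason.

```python
from __future__ import annotations

# Hypothetical allow-lists for the example.
ALLOWED_REGIONS = ("eu-central-1",)
ALLOWED_INSTANCE_TYPES = ("t3.medium", "t3.large")


def evaluate(proposal: dict) -> dict:
    """Stand-in policy engine: returns a decision plus suggested fixes for denied fields."""
    suggested = {}
    if proposal["region"] not in ALLOWED_REGIONS:
        suggested["region"] = ALLOWED_REGIONS[0]
    if proposal["instance_type"] not in ALLOWED_INSTANCE_TYPES:
        suggested["instance_type"] = ALLOWED_INSTANCE_TYPES[-1]
    return {"decision": "denied" if suggested else "approved", "suggested": suggested}


def propose_until_approved(proposal: dict, max_attempts: int = 3) -> tuple[dict, list[str]]:
    """Propose, evaluate, and retry with feedback; nothing is launched until approval."""
    decisions = []
    for _ in range(max_attempts):
        result = evaluate(proposal)
        decisions.append(result["decision"])
        if result["decision"] == "approved":
            return proposal, decisions   # only at this point would the launch proceed
        # Use the denial as feedback and build a corrected proposal.
        proposal = {**proposal, **result["suggested"]}
    raise RuntimeError("proposal still denied; escalate to a human")


final_proposal, decisions = propose_until_approved(
    {"region": "us-east-1", "instance_type": "m5.24xlarge"}
)
print(decisions, final_proposal)
```

Capping the number of attempts keeps the agent from retrying indefinitely; once the cap is reached, the request is escalated rather than forced through.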

Conclusion: Agentic AI is safe when policy-as-code management is implemented

If you are serious about letting agents directly change your infrastructure, do not rely on references to documentation or claims that “you told the agent your standards.” Put policy as code in the middle and make it the final authority. In other words, turn AI from a governance risk into a force multiplier: Platform and security engineers codify and evolve policies, and agents handle repetitive infrastructure tasks inside those boundaries.

Quali Torque environments are launched from reusable blueprints, and policies are evaluated by Open Policy Agent at lifecycle checkpoints. Quali DevOps Agent operates within those guardrails while designing, recommending, and applying changes. If you want to explore what self-service environments with OPA-backed guardrails look like, try the Quali Torque Playground or start a 30-day trial of Quali Torque.