GenAI is genuinely transforming infrastructure operations. It can generate Terraform configs in seconds, provision environments on demand, and put self-service infrastructure within reach of developers who’ve never written a line of IaC in their lives. The productivity gains are real.

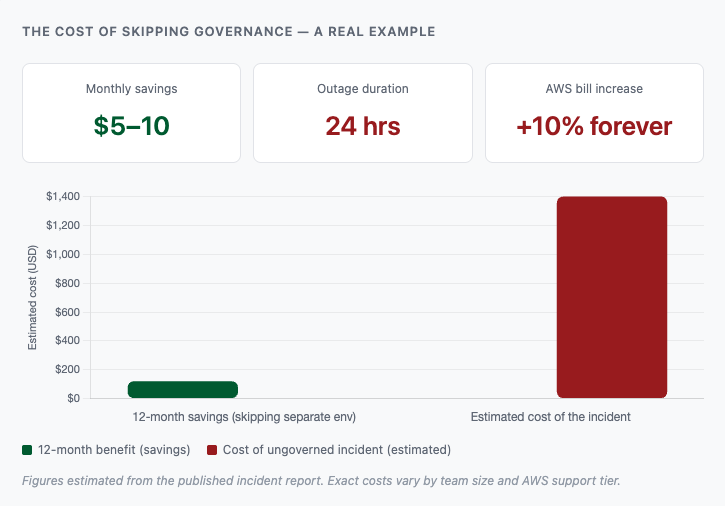

But there’s a version of this story that doesn’t end well, and it’s not hypothetical. We recently broke down exactly how it unfolds: a capable AI agent, a missing governance layer, and 2.5 years of production data gone in a single session. The agent did nothing wrong. The conditions surrounding it were the problem.

That’s the part most teams aren’t thinking hard enough about. The question isn’t whether to use GenAI for infrastructure. It’s who in your organization is responsible for making sure the conditions are right, and whether those people are positioned to do that effectively.

The answer, more often than not, is your senior engineers. And right now, many of them are being underused in exactly the ways that matter most.

AI generates code. It doesn’t understand your infrastructure.

There’s a subtle but critical distinction between an AI that can write IaC and an AI that understands your infrastructure.

GenAI models are trained to produce plausible output. They’re remarkably good at it. But plausible isn’t the same as correct, and correct isn’t the same as safe. A generated Terraform file can look perfectly reasonable while quietly violating your least-privilege access policies, depending on deprecated API versions, or leaving a storage bucket open to the public internet.
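To make "plausible but unsafe" concrete, here is a minimal sketch of a check that reads a Terraform plan exported as JSON and flags two of the problems named above. It assumes the plan was produced with `terraform show -json` and that buckets and security groups are declared with the AWS provider's `aws_s3_bucket_acl` and `aws_security_group` resources. The function name and the two rules are illustrative assumptions, not a complete scanner.

```python
from typing import Any

# ACL values that expose a bucket beyond the account (illustrative, not exhaustive).
PUBLIC_ACLS = {"public-read", "public-read-write"}


def find_risky_resources(plan: dict[str, Any]) -> list[str]:
    """Return human-readable findings for a Terraform plan in JSON form."""
    findings: list[str] = []
    for rc in plan.get("resource_changes", []):
        address = rc.get("address", "<unknown>")
        rtype = rc.get("type")
        # "after" holds the planned attribute values; it is null for deletions.
        after = (rc.get("change") or {}).get("after") or {}

        if rtype == "aws_s3_bucket_acl" and after.get("acl") in PUBLIC_ACLS:
            findings.append(f"{address}: bucket ACL is public ({after['acl']})")

        if rtype == "aws_security_group":
            for rule in after.get("ingress") or []:
                lo, hi = rule.get("from_port"), rule.get("to_port")
                covers_ssh = lo is not None and hi is not None and lo <= 22 <= hi
                open_to_world = "0.0.0.0/0" in (rule.get("cidr_blocks") or [])
                if covers_ssh and open_to_world:
                    findings.append(f"{address}: SSH is open to 0.0.0.0/0")
    return findings
```

A config can pass `terraform validate` and still trip both rules, which is the gap between plausible and safe.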

More dangerously, AI has no awareness of your operational context. It doesn’t know that your compliance framework prohibits certain instance types in production. It doesn’t know that your security team mandated a specific network isolation approach last quarter. It doesn’t know that what looks like a simple resource deletion might cascade through three dependent environments.
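The cascade risk can be sketched as a graph walk. The example below assumes you can export a map of "what depends on what" from your own tooling; the resource names and the `blast_radius` helper are hypothetical and exist only to illustrate the idea.

```python
from collections import deque


def blast_radius(dependents: dict[str, list[str]], target: str) -> list[str]:
    """Return everything that transitively depends on `target`, in breadth-first order."""
    seen = {target}
    affected: list[str] = []
    queue = deque([target])
    while queue:
        node = queue.popleft()
        for child in dependents.get(node, []):
            if child not in seen:
                seen.add(child)
                affected.append(child)
                queue.append(child)
    return affected


# Hypothetical dependency map: key -> resources that depend on it.
DEPENDENTS = {
    "vpc.shared": ["env.dev", "env.staging"],
    "env.staging": ["env.prod-canary"],
}

print(blast_radius(DEPENDENTS, "vpc.shared"))
# → ['env.dev', 'env.staging', 'env.prod-canary']
```

A deletion that looks like one resource in a generated config turns out to touch three environments once the dependency map is taken into account.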

Your senior engineers know all of this. That institutional knowledge, accumulated through real incidents, architectural decisions, and hard-won operational experience, is exactly what makes human oversight irreplaceable.

The goal isn’t to slow AI down. It’s to direct it.

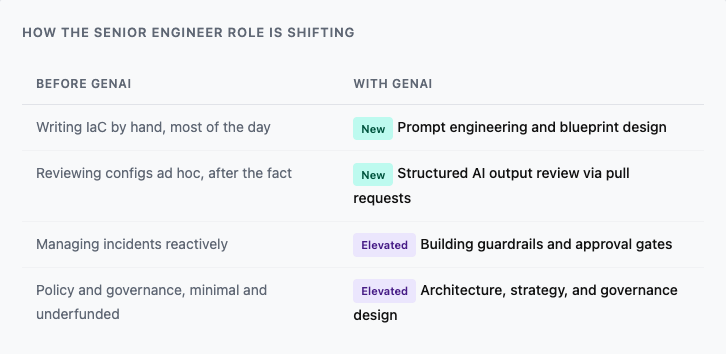

The new job description for senior infrastructure engineers

The role of senior infrastructure engineers isn’t shrinking in the age of GenAI. It’s shifting, toward higher-leverage work that AI genuinely can’t do.

Translating operational reality into precise prompts

The difference between a useful AI output and a dangerous one often comes down to the quality of the prompt. Compare these two:

“Create a Terraform file that provisions a new c8g.large AWS EC2 instance.”

“Create a Terraform file that provisions a new c8g.large AWS EC2 instance. Harden it with security best practices. Enable SSH only from IP 123.123.123.123. Restrict instance access to users with the ec2-users IAM role. Do not assign a public IP.”

The first prompt produces a functioning config. The second produces one that’s actually safe to deploy. The gap between them is engineering judgment: knowing what to ask for because you know what’s at stake if you don’t.

Senior engineers are the people who can build reusable prompt blueprints that encode your operational constraints, so developers across your organization can provision infrastructure safely without needing to think through every security implication themselves. Quali Torque makes this concrete: engineers define environment blueprints and natural-language prompts once, and the rest of the team uses them as a self-service interface, guardrails included.
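As a rough illustration of a reusable prompt blueprint, the sketch below encodes the hardened prompt from the comparison above as a template. The `PromptBlueprint` class and its parameter names are assumptions made for this example; they do not represent Torque's blueprint format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptBlueprint:
    """A task template plus constraints that are always appended to it."""
    task: str
    constraints: tuple[str, ...]

    def render(self, **params: str) -> str:
        # str.format raises KeyError if a required parameter is missing,
        # so a developer cannot silently drop a constraint's input.
        request = self.task.format(**params)
        rules = " ".join(c.format(**params) for c in self.constraints)
        return f"{request} {rules}"


EC2_BLUEPRINT = PromptBlueprint(
    task="Create a Terraform file that provisions a new {instance_type} AWS EC2 instance.",
    constraints=(
        "Harden it with security best practices.",
        "Enable SSH only from IP {ssh_source_ip}.",
        "Restrict instance access to users with the {iam_role} IAM role.",
        "Do not assign a public IP.",
    ),
)

prompt = EC2_BLUEPRINT.render(
    instance_type="c8g.large",
    ssh_source_ip="123.123.123.123",
    iam_role="ec2-users",
)
print(prompt)
```

The developer supplies three values; the security constraints travel with the blueprint rather than depending on each person remembering them.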

Building the guardrails that let AI move fast safely

Speed without guardrails is how you get a capable AI agent doing exactly what it was told, and destroying 2.5 years of production data in the process.

Policy-as-Code, static analysis, and IaC testing frameworks are what let you actually trust AI-generated output, not because you’re checking it manually every time, but because automated controls are catching misconfigured resources before they reach your environments.
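One possible shape for such an automated control is a pipeline step that inspects the plan before anything is applied. The sketch below assumes the plan is available as JSON from `terraform show -json`, and that your team maintains a list of resource types that hold data. The `PROTECTED_TYPES` set and function names are illustrative assumptions.

```python
import json
import sys

# Resource types treated as stateful for this example (an assumption; adjust to your estate).
PROTECTED_TYPES = {"aws_db_instance", "aws_s3_bucket", "aws_ebs_volume"}


def destructive_changes(plan: dict) -> list[str]:
    """Return addresses of protected resources the plan would delete or replace."""
    blocked: list[str] = []
    for rc in plan.get("resource_changes", []):
        actions = (rc.get("change") or {}).get("actions", [])
        # A replacement appears as a delete paired with a create, so it is caught too.
        if "delete" in actions and rc.get("type") in PROTECTED_TYPES:
            blocked.append(rc.get("address", "<unknown>"))
    return blocked


def gate(plan_path: str) -> int:
    """Return a process exit code: 1 if the plan must be blocked, otherwise 0."""
    with open(plan_path) as fh:
        blocked = destructive_changes(json.load(fh))
    for address in blocked:
        print(f"BLOCKED: plan deletes protected resource {address}", file=sys.stderr)
    return 1 if blocked else 0
```

In a CI job, calling `sys.exit(gate("plan.json"))` would fail the build before the destructive change reaches an environment, regardless of whether a human or an AI agent wrote the config.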

Configuring these systems requires the same depth of knowledge needed to vet AI output by hand: understanding of your cloud provider’s security model, familiarity with compliance requirements, experience integrating checks into CI/CD pipelines. This is senior engineer territory, and it’s where their investment in AI-era infrastructure pays the highest dividends.

Torque’s governance layer is built for exactly this. It gives engineers a robust policy authorship system, including AI-assisted policy generation, so that guardrails keep pace with the speed of AI-assisted provisioning.

Auditing what AI produces at critical moments

Even with strong prompts and automated checks in place, human review at key decision points remains essential. The most effective workflow isn’t humans reviewing every line of AI-generated code; it’s AI doing the heavy lifting and surfacing its work for human judgment at the right moments.

Torque operationalizes this directly: its AI Copilot can generate IaC configurations and open them as GitHub pull requests, putting AI output into the same review workflow your team already uses for human-authored code. Your senior engineers review, approve, and merge, with full visibility into what’s changing and why.

The governance gap that most teams are ignoring

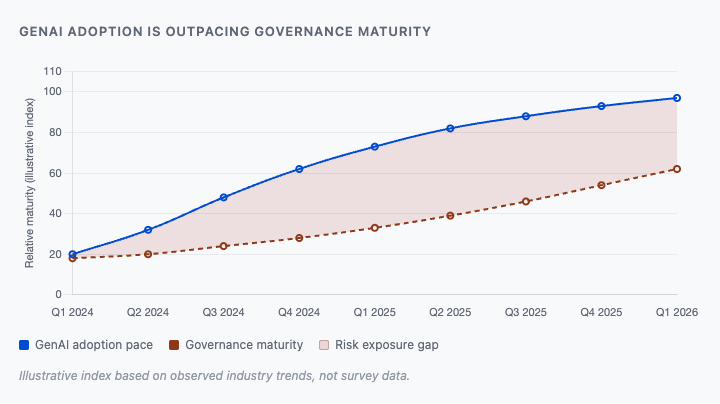

Here’s an uncomfortable truth: most teams adopting GenAI for infrastructure are moving faster than their governance practices can support.

Teams are generating IaC with AI assistants that have no awareness of internal policies. They’re deploying resources that haven’t been tested against compliance requirements. They’re granting AI agents permissions far broader than the tasks they’re performing. And they’re doing all of this without the approval gates and audit trails that would be standard practice for human-authored infrastructure changes.

This is the pattern behind real incidents, including the case we examined in depth where an experienced engineer, using a capable AI agent, lost 2.5 years of production data because the governance layer simply wasn’t there. It’s not a skill problem. It’s a systems problem.

Closing this gap is the highest-leverage infrastructure initiative most engineering organizations should be running right now. It means investing in Policy-as-Code frameworks. It means defining approval workflows that match the risk level of different infrastructure changes. It means treating AI-generated code with the same rigor as any other code entering your production environment.
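Matching approval workflows to risk can start as a small, explicit rule table. The tiers, thresholds, and environment names in this sketch are assumptions chosen for illustration; a real policy would reflect your own change-management rules.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Number of human approvals required per tier (illustrative values).
REQUIRED_APPROVALS = {Risk.LOW: 0, Risk.MEDIUM: 1, Risk.HIGH: 2}


def classify(environment: str, actions: list[str]) -> Risk:
    """Rate a change by where it lands and whether it destroys anything."""
    destructive = "delete" in actions
    if environment == "production" and destructive:
        return Risk.HIGH
    if environment == "production" or destructive:
        return Risk.MEDIUM
    return Risk.LOW


def may_apply(environment: str, actions: list[str], approvals: int) -> bool:
    """True when the change has collected enough approvals for its tier."""
    return approvals >= REQUIRED_APPROVALS[classify(environment, actions)]
```

Under these assumed rules, creating a resource in a dev environment proceeds without waiting, while a deletion in production needs two approvals, whoever or whatever authored the change.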

And it means putting your senior engineers in charge of building those systems, not just using AI as a faster way to do what they were already doing.

Where this is heading: agentic AI with a human at the controls

The current state of GenAI for infrastructure, in which AI generates and humans review, is an early chapter, not the final one.

Agentic AI systems are already beginning to operate more like collaborative team members than code generators. They can monitor logs and infrastructure state in real time, flag anomalies before they become incidents, and take autonomous action on well-defined, lower-risk tasks. The scope of what AI can safely handle autonomously will expand as both the models and the governance frameworks around them mature.

Torque is already moving in this direction. Its AI Copilot can perform real-time analysis of logs, metrics, and infrastructure state, giving it the contextual awareness to generate configurations that are closely aligned with what’s actually running in your environments, rather than generic best-practice templates. It doesn’t need to be prompted with context that it can observe directly.

But the key phrase is supervised autonomy. Agentic doesn’t mean autonomous. The engineers who understand your infrastructure deeply enough to define the boundaries of that autonomy, and to intervene when AI operates outside them, will be the most valuable people in your organization as this shift unfolds.
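Supervised autonomy can be pictured as an explicit boundary that engineers define and the agent cannot widen. The sketch below is a simplified illustration: the action names, the `AUTONOMOUS` allowlist, and the `dispatch` function are all hypothetical, not part of any product API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Action:
    name: str
    environment: str


# The boundary: (action, environment) pairs the agent may perform unattended.
# Anything not listed here goes to a human. Values are illustrative assumptions.
AUTONOMOUS = {
    ("restart_service", "dev"),
    ("restart_service", "staging"),
    ("scale_out", "dev"),
}


def dispatch(
    action: Action,
    execute: Callable[[Action], None],
    escalate: Callable[[Action], None],
) -> str:
    """Run the action if it is inside the boundary; otherwise hand it to a human."""
    if (action.name, action.environment) in AUTONOMOUS:
        execute(action)
        return "executed"
    escalate(action)
    return "escalated"
```

The default is escalation. Expanding what the agent may do on its own is a deliberate edit to the allowlist, which is itself a reviewable change.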

The future of infrastructure engineering isn’t humans versus AI. It’s humans deciding what AI is and isn’t allowed to do, and building the systems that enforce those decisions at speed and scale.

Getting started: what to do before your next deployment

If your team is already using GenAI for infrastructure, or planning to, here’s where to focus first.

Audit your current AI usage for governance gaps

Are AI-generated configs going through the same review process as human-authored ones? Do your AI tools have any awareness of your internal policies? Are you operating with least-privilege access for AI agents? Most teams will find gaps here that are worth closing urgently.
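For the least-privilege question, one quick starting point is to scan the IAM policies attached to your AI agents for wildcard grants. The sketch below assumes policies in the standard AWS IAM JSON layout (`Statement`, `Effect`, `Action`, `Resource`). The function name and the definition of "too broad" are assumptions for this example.

```python
def overly_broad_statements(policy: dict) -> list[dict]:
    """Return Allow statements that pair a wildcard action with a wildcard resource."""

    def as_list(value) -> list[str]:
        # IAM accepts either a single string or a list for Action and Resource.
        if value is None:
            return []
        return [value] if isinstance(value, str) else list(value)

    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may appear unwrapped
        statements = [statements]

    broad: list[dict] = []
    for stmt in statements:
        if stmt.get("Effect") != "Allow":
            continue
        actions = as_list(stmt.get("Action"))
        resources = as_list(stmt.get("Resource"))
        wildcard_action = any(a == "*" or a.endswith(":*") for a in actions)
        wildcard_resource = "*" in resources
        if wildcard_action and wildcard_resource:
            broad.append(stmt)
    return broad
```

An agent that only needs to read plan files from one bucket should not show up in this list. If it does, that is a gap worth closing before the next deployment.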

Invest in blueprint-driven prompt engineering

Don’t let every developer start from a blank prompt. Build a library of validated prompt blueprints that encode your security and operational constraints, and make them the default starting point for common provisioning tasks. In Torque, this is a first-class feature: blueprints and AI prompts are designed to be reused and governed centrally.

Implement Policy-as-Code before you need it

The right time to build guardrails isn’t after an incident. Define your policies, integrate them into your deployment pipeline, and test them against AI-generated output regularly. Torque’s governance system makes this significantly more accessible, including AI-assisted policy authorship for teams building these practices from scratch.

Keep senior engineers close to the AI layer

Resist the temptation to treat GenAI as a way to reduce senior engineering involvement in infrastructure decisions. The opposite is true. Their expertise is what turns AI from a liability into a genuine accelerant.

The bottom line

GenAI is the most significant shift in infrastructure operations in a decade. The teams that get the most out of it won’t be the ones who automate the fastest; they’ll be the ones who pair AI capability with the human judgment needed to direct it safely.

Your senior engineers aren’t being replaced by AI. They’re being asked to do something harder and more valuable: define the rules that AI operates within, audit the output it produces, and build the governance frameworks that let the rest of your organization move fast without breaking things.

Quali Torque is purpose-built for this model, giving engineering teams agentic AI that works within guardrails, surfaces its work for human review, and develops the contextual awareness to become genuinely more capable over time.

The question isn’t whether to bring AI into your infrastructure workflows. It’s whether you’re building the human and governance infrastructure to use it safely.

To see Torque in action, visit the Torque playground, start your 30-day trial or book a live demo focused on SRE and platform use cases to see how Torque plugs into your existing pipelines and tooling.