Run and Govern AI Workloads at Scale

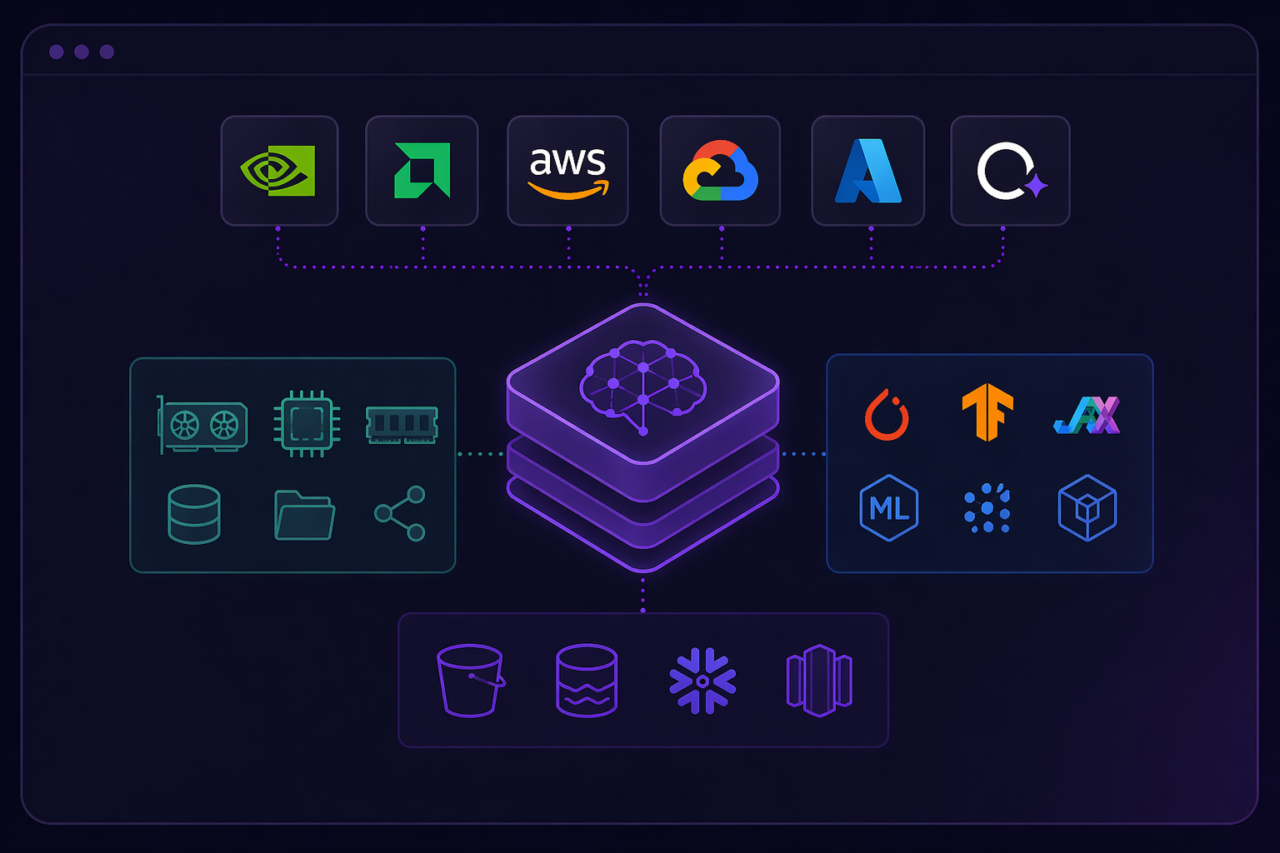

AI workloads, from model stacks to training and runtime services, are orchestrated as complete, governed environments across GPU infrastructure.

Is Your Infrastructure Ready for How AI Actually Works?

AI workloads require tightly coupled environments that most infrastructure platforms were never designed to manage or govern.

AI stacks are not standard infrastructure

Model weights, GPU compute, frameworks, and data pipelines are tightly coupled. Most platforms treat them as separate resources and leave teams to wire them together manually, every time.

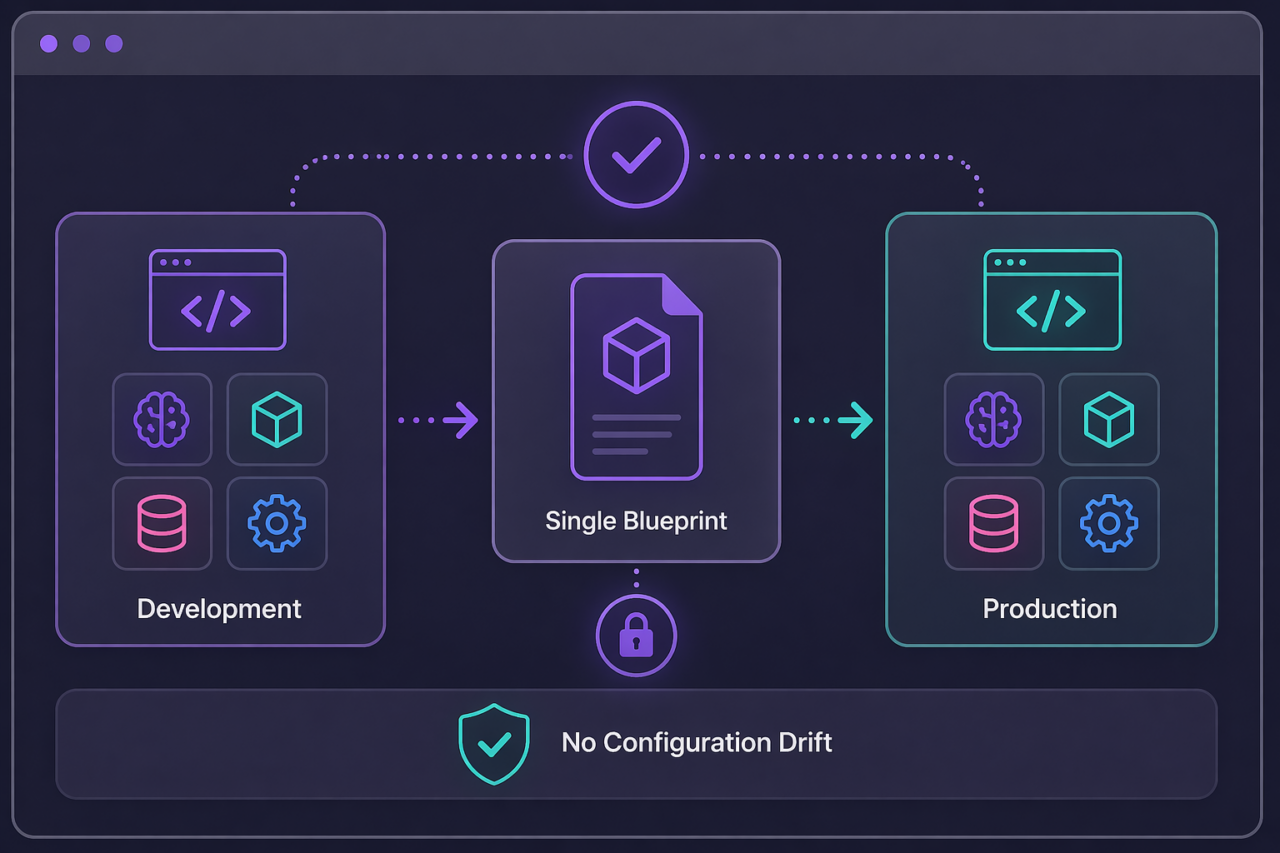

Training and production environments diverge

Without identical, governed environments at every stage, reproducibility is not guaranteed. It is assumed.

AI infrastructure cost does not follow predictable patterns

GPU clusters scale fast. Training runs overrun. Monthly budgets and quarterly reviews are the wrong instruments for this problem.

Torque runs & governs your AI workloads

Define AI environments, including model frameworks, dependencies, and infrastructure

The full stack (GPU compute, model weights, frameworks, and data services) is defined as a single environment blueprint, not as separate components assembled by hand each time.

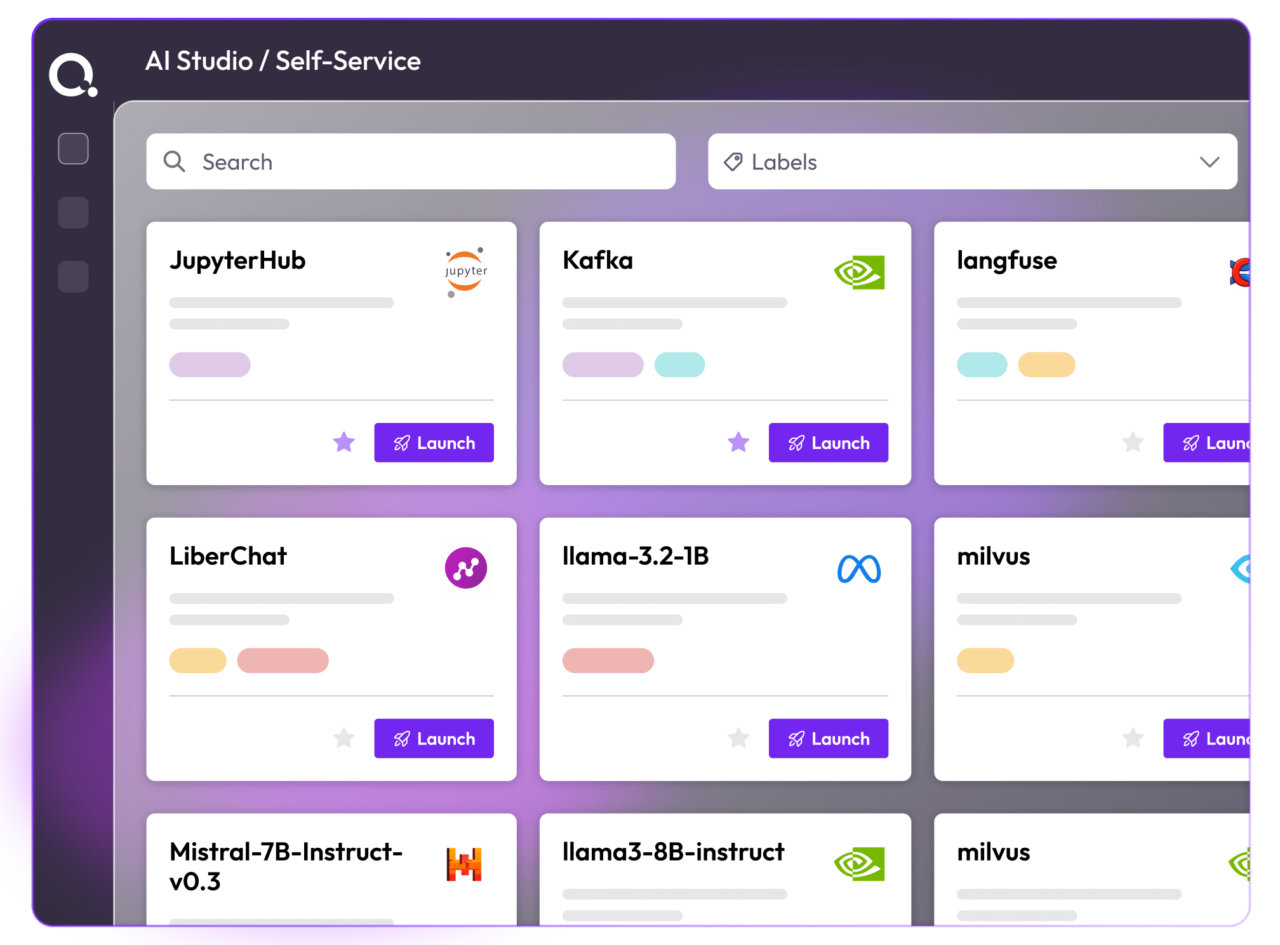

Provision GPU-backed environments on demand

Teams request AI environments through a self-service catalog. Torque provisions the complete stack, with GPU resources, access controls, and cost policies already in place. No infrastructure expertise required.

Execute, scale, and manage workloads with full lifecycle control

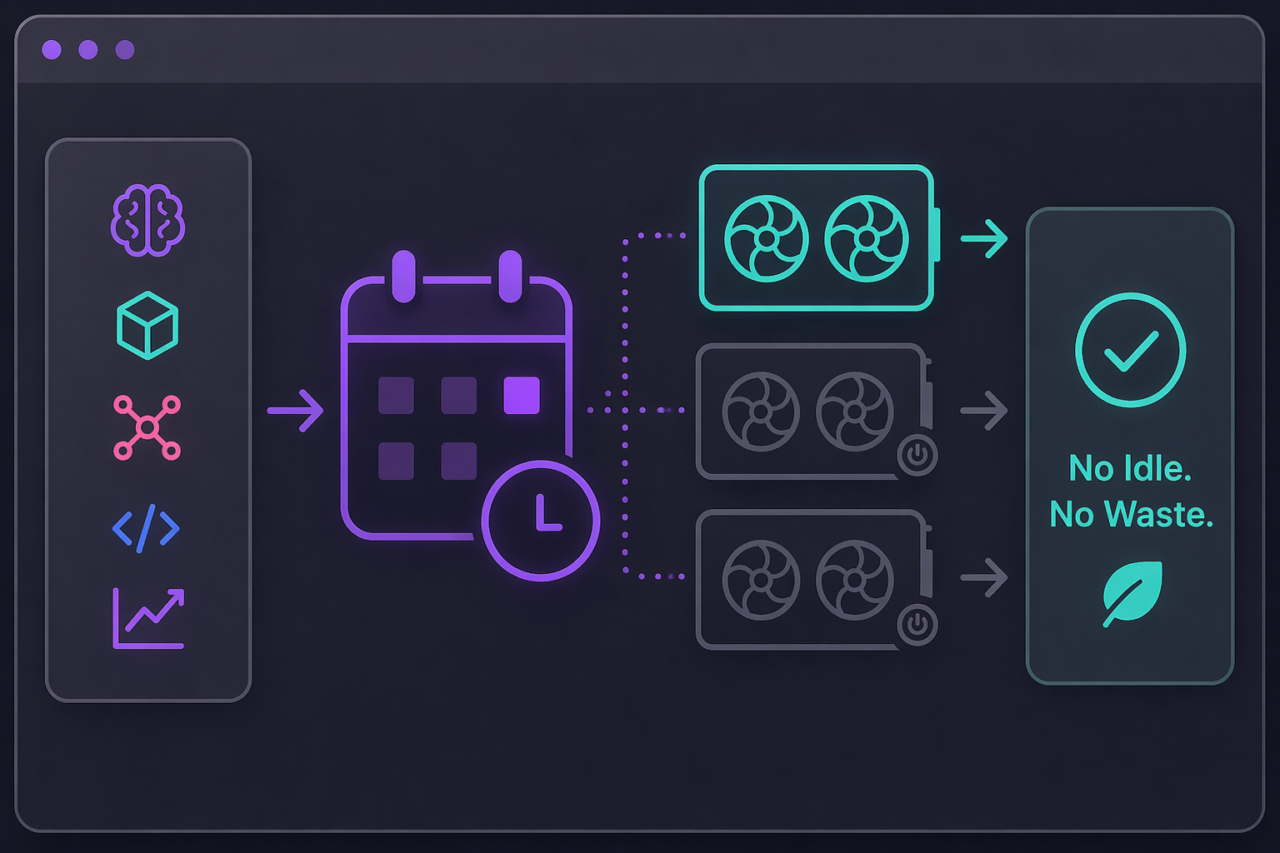

Torque monitors every running AI environment. Compute scales to match workload demand. When a training run completes, the environment shuts down. Nothing runs longer than it should.

How AI Workloads Are Governed

AI Environment Orchestration

GPU compute, model weights, frameworks, and data services defined as a single governed blueprint, not assembled manually each time. Every dependency captured, every component versioned, and the full stack available on demand.

GPU Resource Optimization

Workloads are scheduled dynamically to match actual demand. Capacity scales up for training and shuts off when the job completes. Nothing idles. Nothing accumulates cost while nobody is watching.

Consistent Execution Across Stages

Training and inference environments provisioned from the same blueprint. What ran in development is exactly what runs in production. Configuration drift between stages is eliminated structurally, not managed manually.

Workload Lifecycle Management

Every AI environment monitored from launch to decommission. Drift detected continuously. Environments scale with demand and shut down automatically when work completes. Operational overhead is removed from the teams running the workloads.

Self-Service AI Environments

Teams describe what they need in plain language. Torque provisions the complete environment with GPU resources, runtime, access controls, and cost policies already in place. No infrastructure expertise required. No waiting.

Policy & Cost Governance

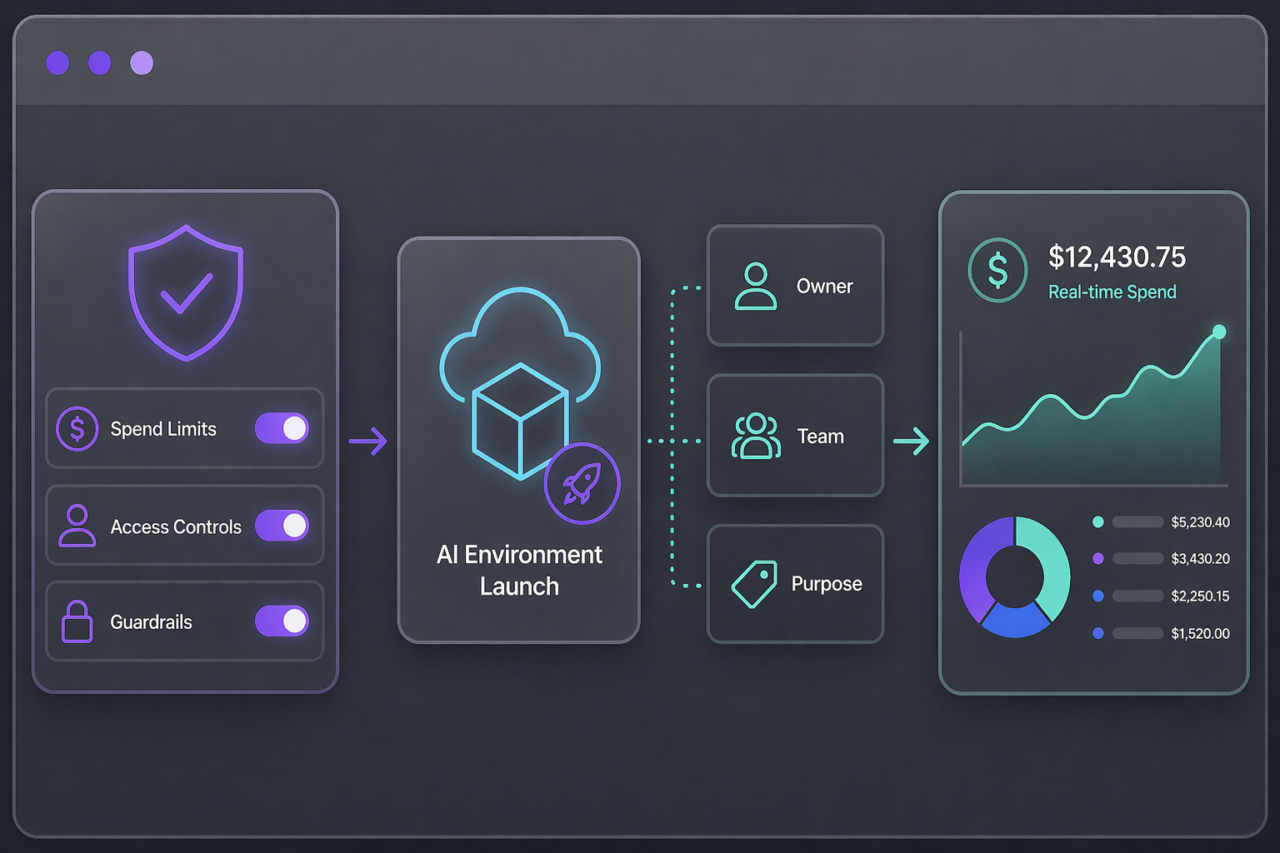

Spend limits and access controls enforced before any AI environment launches. Every workload tagged at creation with owner, team, and purpose. Cost attributed in real time, not reconciled at month end.

Supported Ecosystems

GPU Platforms | Kubernetes | AI/ML Frameworks | Cloud & Hybrid AI Environments

Watch a Demo to See How Quali Supports NVIDIA AI Enterprise (NVAIE)

Watch this video to see how Torque manages each layer of the tech stack that supports agentic AI solutions.

Value & Impact

AI workloads delivered as consistent, repeatable environments

Reduced time to provision GPU infrastructure

Improved GPU utilization and reduced idle cost

Reliable execution across training and production environments

Browse Documentation on Torque’s Support for AI Workloads

Frequently Asked Questions

AI workloads depend on tightly coupled stacks: compute, models, frameworks, and data. Treating them as separate resources creates inconsistency and operational overhead.

Training and production environments are provisioned from the same environment blueprint. What runs in development is exactly what runs in production, with no drift.

Environments scale with demand and shut down automatically when work completes. Nothing runs longer than it should.

Try it yourself

Explore Torque in a live playground

No installation. No configuration. Launch a fully governed environment in minutes and see how Torque discovers, normalizes, and controls infrastructure across your technology stack.

Pre-loaded blueprints across IaC, containers, and GPU infrastructure, ready to launch in one click.

Real governed environments that provision, enforce policy, and tear down automatically.

No credentials required. Explore the full platform experience without connecting your own cloud.

Live cost and drift tracking so you can see the governance layer in action from day one.

Want to understand how Torque can transform your business?

Talk to our team and see what governed infrastructure looks like in your environment.